Marking AI-Generated Content: A Technical Guide for Providers Under the AI Act

Generative AI providers: understand AI Act Article 50(2) requirements for machine-readable content marking, multi-layered marking, detection, and compliance strategies to avoid €15M fines.

Key Takeaways

- AI Act Article 50(2) mandates that generative AI providers mark synthetic audio, image, video, and text outputs in a machine-readable format.

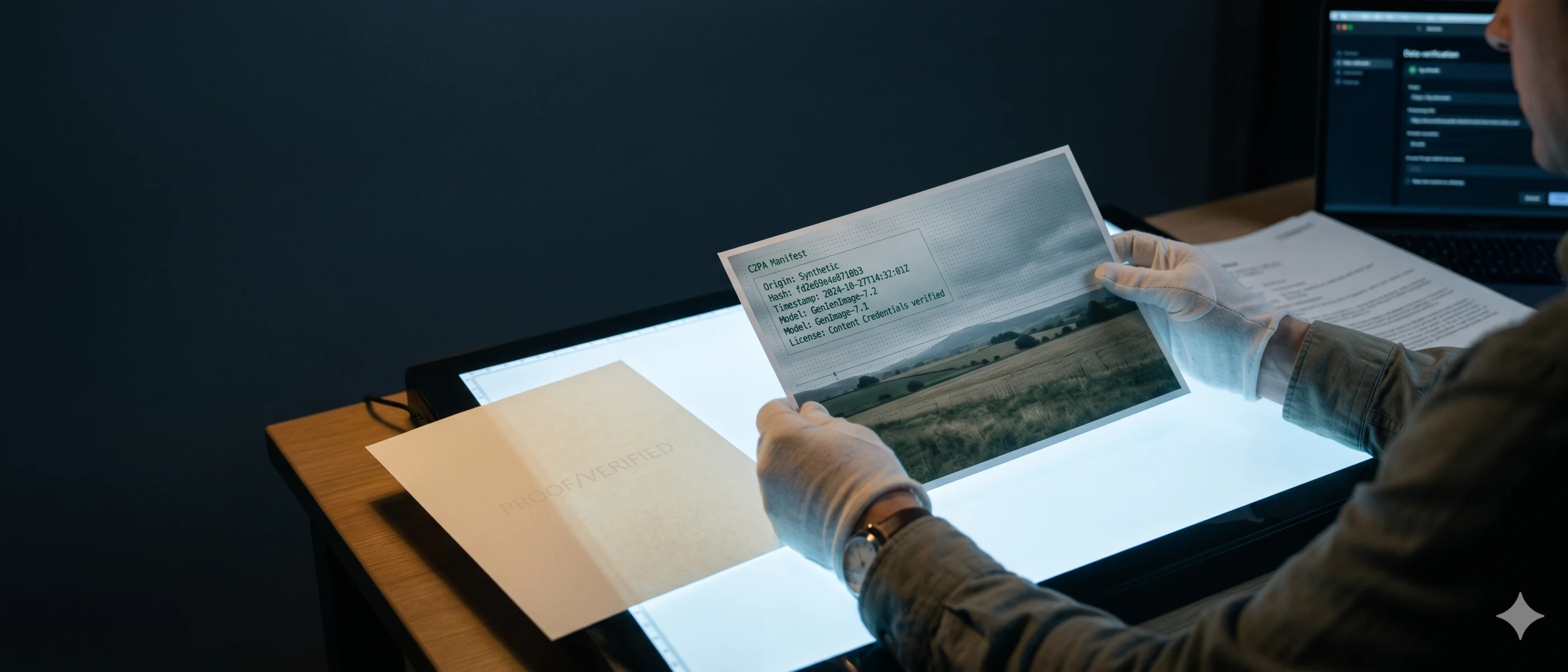

- The draft Code of Practice recommends a multi-layered technical marking approach typically combining digitally signed metadata and imperceptible watermarking. Industry tools such as C2PA-based provenance solutions or SynthID may serve as implementation examples.

- Marking by providers is a structural, machine-readable obligation that is legally distinct from the human-visible AI-generated content labeling required of deployers.

- The marking obligation does not apply to the extent that an AI system performs an assistive function for standard editing or does not substantially alter the input data provided by the deployer or its semantics.

- Non-compliance with Article 50 transparency obligations risks fines of up to €15 million or 3% of global annual turnover, with enforcement beginning August 2, 2026.

1. General Overview of Article 50(2) AI Act

Compliance with European generative AI transparency rules begins at the model level. As the technical foundations of generative AI come under regulatory scrutiny, providers must implement structural safeguards to ensure their outputs can be identified across the digital ecosystem.

1.1 Scope and purpose

Under AI Act Art. 50(2), providers of AI systems, including general-purpose AI systems, that generate synthetic audio, image, video, or text content must ensure that these outputs are marked in a machine-readable format and detectable as artificially generated or manipulated. The purpose of this obligation is to combat disinformation and enable downstream identification of synthetic media. The provision also contains exemptions: the marking obligation does not apply to the extent that an AI system performs an assistive function for standard editing (such as a grammar checker) or does not substantially alter the input data provided by the deployer or its semantics, nor where its use is authorised by law to detect, prevent, investigate, or prosecute criminal offences.
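A provider might turn these scope rules into an explicit gate in its generation pipeline. The sketch below is a simplification under stated assumptions: the class, field, and function names are hypothetical, and the legal assessment behind each flag is assumed to have been made elsewhere. The statutory wording ties the exemptions to what the system does rather than to a particular content type, so the gate applies them to every modality.

```python
from dataclasses import dataclass

COVERED_MODALITIES = {"audio", "image", "video", "text"}


@dataclass(frozen=True)
class GenerationRequest:
    modality: str                     # one of COVERED_MODALITIES
    assistive_editing: bool           # e.g. grammar or spelling correction only
    alters_input_substantially: bool  # assumption: True for prompt-to-content generation


def marking_required(req: GenerationRequest) -> bool:
    """Hypothetical gate deciding whether an output must carry machine-readable marks."""
    if req.modality not in COVERED_MODALITIES:
        raise ValueError(f"unexpected modality: {req.modality!r}")
    # The Art. 50(2) exemptions depend on the system's function, not on one modality.
    if req.assistive_editing:
        return False
    return req.alters_input_substantially
```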

1.2 The provider’s role under Article 50(2)

It is critical to distinguish between the technical duties of a provider and the disclosure duties of a deployer. Under Article 50(2), the provider's responsibility is purely technical: you must build machine-readable marking directly into the generation pipeline so that the provenance signal travels with the output file. By contrast, human-visible labeling of AI-generated content falls mainly under Article 50(4), which obliges the deployer (the natural or legal person using the AI system under its authority) to disclose that deep fakes, and text published to inform the public on matters of public interest, have been artificially generated or manipulated.

1.3 Why the Code of Practice matters

While the AI Act lays down the binding legal obligations, the second draft Code of Practice on marking and labelling of AI-generated content (the “Draft Code”) provides a practical, voluntary framework for implementing Article 50(2). The European Commission’s forthcoming guidelines are expected to clarify the scope of application, relevant definitions, exceptions, and other horizontal issues under Article 50. Once the Code is finalized and approved, providers who adhere to it are expected to be able to rely on it to demonstrate compliance (often described as a "presumption of conformity"). This limits regulatory exposure and provides a defensible benchmark if your marking and detection mechanisms are challenged.

2. Marking of AI-Generated Content

To fulfill Article 50(2) obligations, providers must design a marking architecture that survives the complex journey from API generation to endpoint rendering.

2.1 Understanding the multi-layered marking approach

Regulators explicitly recognize that no single marking technique is infallible. Consequently, the draft Code recommends a "multi-layered marking approach." This strategy relies on redundancy, embedding origin signals in several forms at once. The first layer typically consists of cryptographically secured metadata attached to the file container. The second layer relies on imperceptible digital watermarking embedded directly into the image, audio, or video signal itself, so that the mark can persist even if the file container is stripped or the content is reformatted.
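The two layers can be sketched in a few lines. This is a toy under explicit assumptions: content is represented as a list of floating-point samples, the watermark is a simple additive spread-spectrum pattern rather than a neural watermark, the metadata is a JSON record protected with an HMAC as a stand-in for the certificate-based signatures C2PA actually uses, and the key, seed, and function names are hypothetical.

```python
import hashlib
import hmac
import json
import random

# Hypothetical values for illustration only.
SIGNING_KEY = b"hypothetical-provider-key"  # C2PA uses X.509 certificate signatures, not HMAC
WATERMARK_SEED = 2026
WATERMARK_STRENGTH = 1.0


def embed_watermark(samples: list[float]) -> list[float]:
    """Layer 2: add a low-amplitude pseudo-random +/-1 pattern to the content itself."""
    rng = random.Random(WATERMARK_SEED)
    return [s + WATERMARK_STRENGTH * rng.choice((-1.0, 1.0)) for s in samples]


def signed_metadata(samples: list[float], generator: str) -> dict:
    """Layer 1: provenance record bound to the content hash and protected by a MAC."""
    payload = {
        "generator": generator,
        "digital_source_type": "trainedAlgorithmicMedia",
        "content_sha256": hashlib.sha256(json.dumps(samples).encode()).hexdigest(),
    }
    body = json.dumps(payload, sort_keys=True).encode()
    return {"payload": payload, "mac": hmac.new(SIGNING_KEY, body, hashlib.sha256).hexdigest()}


def mark_output(samples: list[float], generator: str) -> dict:
    """Watermark first, then sign, so the metadata describes the final content."""
    watermarked = embed_watermark(samples)
    return {"content": watermarked, "metadata": signed_metadata(watermarked, generator)}
```

The ordering matters: if the metadata were created before the watermark was embedded, its content hash would no longer match the file that is actually delivered.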

2.2 What providers should implement in practice

In practice, your engineering teams should adopt industry-standard protocols rather than proprietary marking schemes. For the metadata layer, consider widely adopted provenance standards such as C2PA (Coalition for Content Provenance and Authenticity), paired with the IPTC Digital Source Type property set to a value that designates the content as synthetic (for fully generated media, "trainedAlgorithmicMedia"). For the watermarking layer, consider technology such as Google DeepMind's SynthID, which embeds imperceptible marks into images, audio, video, and text; its text watermarking component has been released as open source. For example, OpenAI adds C2PA metadata to DALL-E 3 image outputs to cryptographically bind the file to its generative origin. Providers should inject these marks programmatically before the output is returned via API or user interface.
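For the IPTC part of the metadata layer, the Digital Source Type is written as an XMP property in the IPTC Extension namespace. The sketch below builds a minimal XMP fragment with Python's standard library; the function name is hypothetical, the xpacket wrapper is omitted, and embedding the packet into an actual JPEG or PNG file is assumed to be handled by a metadata tool such as ExifTool or by a C2PA SDK.

```python
import xml.etree.ElementTree as ET

NAMESPACES = {
    "x": "adobe:ns:meta/",
    "rdf": "http://www.w3.org/1999/02/22-rdf-syntax-ns#",
    "Iptc4xmpExt": "http://iptc.org/std/Iptc4xmpExt/2008-02-29/",
}
# IPTC NewsCodes term for media created by a trained (generative) model.
TRAINED_ALGORITHMIC_MEDIA = "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"


def build_xmp(digital_source_type: str = TRAINED_ALGORITHMIC_MEDIA) -> str:
    """Return a minimal XMP fragment declaring the IPTC Digital Source Type."""
    for prefix, uri in NAMESPACES.items():
        ET.register_namespace(prefix, uri)
    xmpmeta = ET.Element(f"{{{NAMESPACES['x']}}}xmpmeta")
    rdf = ET.SubElement(xmpmeta, f"{{{NAMESPACES['rdf']}}}RDF")
    description = ET.SubElement(
        rdf, f"{{{NAMESPACES['rdf']}}}Description", {f"{{{NAMESPACES['rdf']}}}about": ""}
    )
    source_type = ET.SubElement(description, f"{{{NAMESPACES['Iptc4xmpExt']}}}DigitalSourceType")
    source_type.text = digital_source_type
    return ET.tostring(xmpmeta, encoding="unicode")


if __name__ == "__main__":
    print(build_xmp())
```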

3. Detection of the Marking of AI-Generated Content

Marking synthetic media is only half the regulatory requirement; the markings must also be reliably detectable by downstream platforms, researchers, and end-users.

3.1 Understanding detection and external verification

Synthetic content detection relies on external verification ecosystems. Article 50(2) requires outputs to be "detectable as artificially generated or manipulated." This means the markings you embed cannot rely on a closed-loop system accessible only to your internal engineers. Intermediaries, such as social media platforms or news organizations, must have the technical capability to scan, read, and verify the machine-readable marks to subsequently trigger user-facing labeling interfaces.
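The idea of external verification can be illustrated with a spread-spectrum watermark whose detection key is shared with third parties. In this sketch, which is an illustration rather than how SynthID or any production watermark works, the provider derives a pseudo-random ±1 pattern from a seed, and anyone holding the seed can compute a normalized correlation score and flag content whose z-score exceeds a threshold. The seed values, the embedding strength, and the threshold of 4 are assumptions.

```python
import math
import random


def pattern(n: int, seed: int) -> list[float]:
    """Pseudo-random +/-1 sequence derived from a (hypothetically shared) detection seed."""
    rng = random.Random(seed)
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]


def embed(samples: list[float], seed: int, strength: float = 1.0) -> list[float]:
    """Add the seed's pattern to the content at low amplitude."""
    return [s + strength * p for s, p in zip(samples, pattern(len(samples), seed))]


def detect(samples: list[float], seed: int, z_threshold: float = 4.0) -> tuple[bool, float]:
    """Return (is_marked, z_score) from the correlation between content and pattern."""
    n = len(samples)
    mean = sum(samples) / n
    centred = [s - mean for s in samples]
    std = math.sqrt(sum(c * c for c in centred) / n)
    if std == 0:
        return False, 0.0
    corr = sum(c * p for c, p in zip(centred, pattern(n, seed))) / n
    z = corr * math.sqrt(n) / std  # roughly standard normal for unmarked content
    return z > z_threshold, z
```

Publishing a watermark key also helps attackers estimate and subtract the pattern, which is one reason production schemes often keep keys private and expose a detection service instead. The threshold trades missed detections against false positives: under the Gaussian approximation, z > 4 flags unmarked content roughly 3 times in 100,000.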

3.2 What providers should implement in practice

Providers should expose dedicated verification APIs or publish the public keys or certificates that allow third parties to verify provenance signatures independently. If you use C2PA, any conformant reader, including open-source validation tools, can verify the manifest against the signer's certificate without contacting you. If you employ custom or advanced neural watermarks, you are expected to provide an open-source or otherwise readily accessible detection tool or endpoint. Do not hide the detection mechanism behind prohibitive paywalls, as this contradicts the transparency intent of the AI Act.
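One check any third-party verifier performs on a C2PA-style manifest is the hard binding: recomputing a hash of the asset and comparing it with the hash recorded at generation time. The sketch below shows only that step, with a hypothetical, simplified JSON manifest and hypothetical function names. A real verifier must also validate the manifest's signature against the provider's certificate, and C2PA manifests are embedded binary structures rather than bare JSON.

```python
import hashlib
import json


def make_manifest(asset: bytes, generator: str) -> str:
    """Hypothetical, heavily simplified manifest; not the C2PA wire format."""
    return json.dumps({
        "claim_generator": generator,
        "asset_sha256": hashlib.sha256(asset).hexdigest(),
    })


def verify_hard_binding(asset: bytes, manifest_json: str) -> bool:
    """Third-party check: does the asset still match the hash recorded at generation?"""
    manifest = json.loads(manifest_json)
    return hashlib.sha256(asset).hexdigest() == manifest.get("asset_sha256")
```

Because any re-encoding changes the bytes, a recompressed file fails this check even though it is still synthetic, which is why the watermark layer is needed alongside metadata.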

4. Quality Requirements for Marking and Detection

To meet regulatory standards, marking implementations must pass a rigorous assessment balancing technical capability against operational reality.

4.1 Understanding effectiveness, reliability, robustness and interoperability

Article 50(2) requires that providers' technical solutions for marking and detection be effective, interoperable, robust, and reliable "as far as this is technically feasible."

- Effectiveness & Reliability: The mark must consistently appear in outputs and accurately reflect the system's origin, with a low false-positive rate.

- Robustness: The signal must survive common manipulations, such as lossy compression (JPEG/MP3), cropping, color adjustments, or deliberate attempts by malicious actors to strip metadata.

- Interoperability: The formats used must align with global standards (like C2PA) to ensure cross-platform compatibility.
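Effectiveness and reliability can be tracked as two rates measured on a labelled test set: the share of marked outputs the detector finds, and the share of unmarked content it wrongly flags. A minimal sketch, assuming detector results are collected as (ground truth, prediction) pairs; the names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectionReport:
    true_positive_rate: float   # marked outputs correctly detected
    false_positive_rate: float  # unmarked content wrongly flagged as AI-generated


def evaluate(results: list[tuple[bool, bool]]) -> DetectionReport:
    """results holds (actually_marked, detector_flagged) pairs from a labelled test set."""
    on_marked = [flagged for marked, flagged in results if marked]
    on_unmarked = [flagged for marked, flagged in results if not marked]
    if not on_marked or not on_unmarked:
        raise ValueError("the test set needs both marked and unmarked samples")
    return DetectionReport(
        true_positive_rate=sum(on_marked) / len(on_marked),
        false_positive_rate=sum(on_unmarked) / len(on_unmarked),
    )
```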

4.2 How providers can meet these requirements in practice

Engineering leaders must manage the trade-offs between robustness and technical feasibility. Highly robust neural watermarks require additional compute, increasing generation latency and infrastructure costs. Providers should benchmark their marking systems against common manipulations and adversarial attacks (e.g., screenshotting an image or re-encoding audio) and document these benchmarks meticulously. If a highly robust watermark introduces unacceptable latency (for example, making real-time video generation infeasible), rely more heavily on robust metadata standards and document this architectural decision to show that you have pushed the limits of current "technical feasibility." Article 50(2) itself allows providers to take into account the specificities and limitations of each content type, the costs of implementation, and the generally acknowledged state of the art.
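A benchmarking harness for this kind of documentation can record marking latency and the survival rate of the mark under each attack. The sketch below is hypothetical throughout: the attacks are crude stand-ins (truncation for cropping, coarse rounding for lossy re-encoding), and the mark is a deliberately fragile sentinel value so that the harness has something to catch. A real benchmark would plug in the production marker, detector, and codecs.

```python
import json
import statistics
import time
from typing import Callable

Signal = list[float]


def benchmark(outputs: list[Signal],
              mark: Callable[[Signal], Signal],
              detect: Callable[[Signal], bool],
              attacks: dict[str, Callable[[Signal], Signal]]) -> dict:
    """Mark each output, apply every attack, and report how often the mark survives."""
    latencies, marked = [], []
    for out in outputs:
        start = time.perf_counter()
        marked.append(mark(out))
        latencies.append(time.perf_counter() - start)
    survival = {
        name: sum(detect(attack(m)) for m in marked) / len(marked)
        for name, attack in attacks.items()
    }
    return {"mean_marking_latency_ms": 1000 * statistics.mean(latencies),
            "survival_rate": survival}


# Hypothetical, deliberately fragile mark: a sentinel value appended to the signal.
SENTINEL = 12345.0


def fragile_mark(signal: Signal) -> Signal:
    return signal + [SENTINEL]


def fragile_detect(signal: Signal) -> bool:
    return bool(signal) and signal[-1] == SENTINEL


# Crude stand-ins for real manipulations.
ATTACKS = {
    "none": lambda s: s,
    "crop_last_10pct": lambda s: s[: int(len(s) * 0.9)],
    "requantize": lambda s: [round(x / 8) * 8 for x in s],
}

if __name__ == "__main__":
    outputs = [[float(i) for i in range(100)] for _ in range(5)]
    report = benchmark(outputs, fragile_mark, fragile_detect, ATTACKS)
    print(json.dumps(report, indent=2))  # archive this record as benchmark evidence
```

Run against the fragile mark, the report shows a survival rate of 1.0 with no attack and 0.0 under both the crop and the requantization attacks, the kind of result that should be documented and used to justify adding a more robust layer.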

5. Testing, Verification and Compliance

With transparency rules carrying heavy enforcement penalties, generative AI compliance must transition from an engineering objective to an auditable organizational process.

5.1 Understanding testing and compliance obligations

Violations of Article 50 transparency obligations can lead to administrative fines of up to €15,000,000 or 3% of your total worldwide annual turnover for the preceding financial year, whichever is higher. These obligations apply from August 2, 2026. Before this date, providers should subject their marking systems to extensive red-teaming and adversarial testing to confirm that they meet the quality thresholds defined in the forthcoming Code of Practice.
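The fine ceiling is simple arithmetic. A minimal sketch, assuming turnover is given in whole euros; it also reflects Article 99(6) AI Act, under which the lower of the two amounts applies to SMEs, including start-ups. The function and constant names are hypothetical.

```python
FIXED_CAP_EUR = 15_000_000
TURNOVER_SHARE_PERCENT = 3


def max_article50_fine_eur(worldwide_turnover_eur: int, is_sme: bool = False) -> int:
    """Ceiling of the administrative fine for Article 50 breaches.

    worldwide_turnover_eur: total worldwide annual turnover for the preceding financial year.
    """
    turnover_cap = worldwide_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    if is_sme:
        # Art. 99(6): for SMEs, including start-ups, whichever amount is lower applies.
        return min(FIXED_CAP_EUR, turnover_cap)
    # Art. 99(4): otherwise, whichever amount is higher applies.
    return max(FIXED_CAP_EUR, turnover_cap)
```

For an undertaking with €2 billion in turnover, the ceiling is €60 million rather than €15 million.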

5.2 How providers should organise compliance in practice

Establish a cross-functional working group of AI researchers, legal counsel, and product managers now. This team should audit all existing generative pipelines (audio, image, video, and text) against the draft Code. Develop automated test suites that deliberately attempt to strip your watermarks and metadata from outputs, recording the success rate to measure robustness. Finally, formalize your intent to adopt the final Code of Practice by June 2026, so that your systems can rely on it to demonstrate conformity well ahead of the August 2026 application date.
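The audit step can start as a simple checklist run over every pipeline. The sketch below is hypothetical: the checklist items are drawn from the sections of this guide rather than quoted from the draft Code, and the record fields and pipeline names are illustrative.

```python
from dataclasses import dataclass

# Hypothetical checklist derived from this guide, not quoted from the draft Code.
CHECKS = ("metadata_layer", "watermark_layer", "public_detection", "robustness_tested")


@dataclass(frozen=True)
class PipelineStatus:
    name: str
    modality: str            # "audio", "image", "video" or "text"
    metadata_layer: bool     # signed provenance metadata attached to outputs
    watermark_layer: bool    # imperceptible watermark embedded in the content
    public_detection: bool   # third parties can verify or detect the marks
    robustness_tested: bool  # strip/attack tests run and documented


def audit(pipelines: list[PipelineStatus]) -> dict[str, list[str]]:
    """Map each pipeline name to the checklist items it does not yet satisfy."""
    return {p.name: [check for check in CHECKS if not getattr(p, check)] for p in pipelines}
```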

About the author

Junzhe Dai

Junzhe Dai is a PhD candidate at the Faculty of Law, Humboldt University of Berlin. His research focuses on data market regulation, data protection law, and AI governance, with particular interest in the GDPR, the AI Act, the Data Act, and comparative analyses of EU and Chinese digital regulatory frameworks.
